Identity columns are widely used as primary key columns in SQL Server tables. They can be one of the data types TINYINT, SMALLINT, INT, BIGINT, NUMERIC or DECIMAL. Each of these data types has an upper limit and when that upper limit is reached, an error is raised and further inserts into the table are impossible.

The error that is raised looks like the following:

[red_box]

Msg 8115, Level 16, State 1, Line 7

Arithmetic overflow error converting IDENTITY to data type tinyint.

Arithmetic overflow occurred.

[/red_box]

Max allowed values for the accepted data types are:

| Data Type | Max Value |

| TINYINT | 255 |

| SMALLINT | 32767 |

| INT | 2147483647 |

| BIGINT | 9223372036854775807 |

| DECIMAL/NUMERIC | 99999999999999999999999999999999999999 |

For decimal/numeric data types, the max value (38 digits) results from the max precision when the scale is 0, that is NUMERIC(38,0). If you try to use a scale other than 0, you’ll get:

[red_box]

Msg 2749, Level 16, State 2, Line 10

Identity column ‘Id’ must be of data type int, bigint, smallint, tinyint, or decimal or numeric with a scale of 0, and constrained to be nonnullable.

[/red_box]

List columns that may overflow

So. Here is a script that will iterate through all databases on an instance and list all columns that are about to overflow. The exact definition of “about to overflow” is that the current identity value is more than 90 % of the max for the data type. But you can easily change that in the next to last row in the script.

The script is based on SQL Server metadata, so it is not particularly heavy to run. But test it first.

IF OBJECT_ID('tempdb..#OverflowColumns') IS NOT NULL BEGIN

DROP TABLE #OverflowColumns

END

CREATE TABLE #OverflowColumns

(

DatabaseName SYSNAME NOT NULL,

TableName SYSNAME NOT NULL,

ColumnName SYSNAME NOT NULL,

DataType SYSNAME NOT NULL,

IdentCurrent NUMERIC(38,0) NULL,

PercentOfMax NUMERIC(10,2) NULL

)

EXECUTE sp_MSforeachdb 'USE [?]

;WITH CTE_DT AS

(

SELECT C.object_id, S.name + ''.'' + SO.name AS TableName, C.name AS ColumnName, C.user_type_id, C.precision, T.name AS DataType, IDENT_CURRENT(S.name + ''.'' + SO.name) AS IdentCurrent

FROM sys.columns C

JOIN sys.objects SO

ON C.object_id = SO.object_id

AND SO.type = ''U''

JOIN sys.schemas S

ON SO.schema_id = S.schema_id

JOIN sys.types T

ON C.user_type_id = T.user_type_id

WHERE C.is_identity = 1

)

,CTE_DT2 AS

(

SELECT object_id, TableName, ColumnName, DataType, IdentCurrent,

CASE

WHEN user_type_id = 48 THEN IdentCurrent / 255

WHEN user_type_id = 52 THEN IdentCurrent / 32767

WHEN user_type_id = 56 THEN IdentCurrent / 2147483647

WHEN user_type_id = 127 THEN IdentCurrent / 9223372036854775807

WHEN user_type_id IN (106,108) THEN IdentCurrent / CAST(REPLICATE(''9'', precision) AS NUMERIC(38,0))

END AS PercentOfMax

FROM CTE_DT

)

INSERT #OverflowColumns (DatabaseName, TableName, ColumnName, DataType, IdentCurrent, PercentOfMax)

SELECT DB_NAME(), TableName, ColumnName, DataType, IdentCurrent, PercentOfMax

FROM CTE_DT2

WHERE PercentOfMax > 0.9

'

SELECT * FROM #OverflowColumns

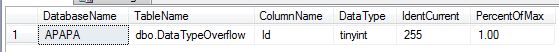

The results might look like this for a tinyint identity column that is about to overflow since the current identity value is 255:

If you find columns that are about to overflow, you are very lucky if they aren’t part of a primary- foreign key relation. If they are, and you try to change the data type, you may run into the following errors:

[red_box]

Msg 5074, Level 16, State 1, Line 18

The object ‘pkId’ is dependent on column ‘Id’.

[/red_box]

[red_box]

Msg 4922, Level 16, State 9, Line 18

ALTER TABLE ALTER COLUMN Id failed because one or more objects access this column.

[/red_box]

How to solve that problem depends heavily on your system, but perhaps one method would be to drop all constraints before the data type change and then re-create constraints. But again, that depends on if it is safe to do that in your system.